StreamAnalytics is a key component if you plan to build an analytics or IoT solution in Azure. It is a really powerful resource once you get it configured. However, automating its deployment is a topic that isn’t covered much: there are currently zero templates in the Azure quick start GitHub repo and only a handful of PowerShell examples out there. Since I spin up demo environments on Event Hub and StreamAnalytics quite often, I was asked to write about how I do it.

Event Hub Provisioning

StreamAnalytics usually works with Event Hub, and there is an excellent article by Paolo Salvatori (see the references) that shows you how to provision one. If you read the comments on his article, you’ll also see how to add Authorization rules. The script I provide includes that modification. With that script, you can create an Event Hub resource for use with StreamAnalytics in no time.

The format of StreamAnalytics provisioning

Working with the PowerShell cmdlets for StreamAnalytics takes a while to get used to, because Get-AzureRmStreamAnalyticsJob and New-AzureRmStreamAnalyticsJob basically work with a JSON template, but not the JSON Resource Template we know from ARM. Instead, it is a custom format for StreamAnalytics. In an ideal world we could export the StreamAnalytics JSON config to a file and import it again when we need it, but it isn’t that simple. The exported JSON is stripped of sensitive information, like keys and passwords, and you need to add that back into the JSON file before you submit it on import. That’s not a simple task when the JSON file is several hundred lines long. To solve this, I wrote a script that handles the task.

The objectives of my provisioning script are 1) to look up the keys and other values that are missing in the JSON config file and 2) to make it possible to set new datasources, so you can move a StreamAnalytics solution between environments such as Dev, Test and Prod.

What I don’t try to do is to change names of SQL tables, Storage Containers, etc, since that probably is part of the solution (you don’t change a SQL table name between test and prod).

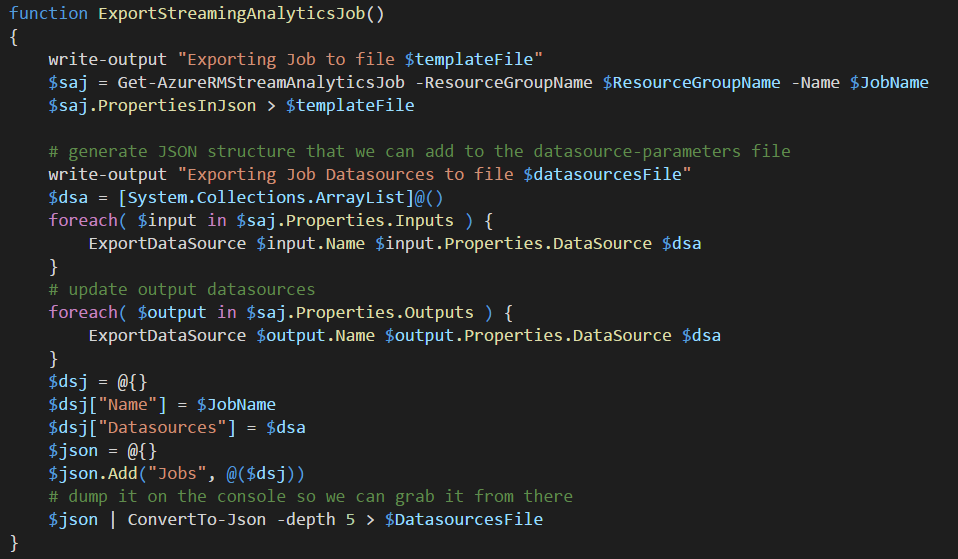

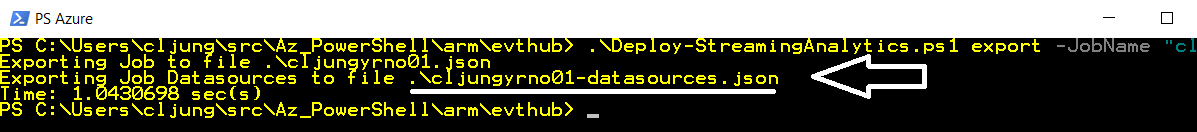

Exporting a StreamAnalytics config

This is really as easy as 1-2-3. All you need to do is invoke Get-AzureRmStreamAnalyticsJob and pipe the output to a file. The PropertiesInJson property comes in handy, as it holds exactly the config value we can import later.
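As a minimal sketch of that export step (not the actual code of my script), assuming you are already logged in with the AzureRM module: the function name `Export-JobConfig` and its parameters are placeholders made up for this example.

```powershell
# Sketch only: assumes Login-AzureRmAccount has been run and that the
# AzureRM.StreamAnalytics cmdlets are available in the session.
function Export-JobConfig {
    param(
        [string]$ResourceGroupName,
        [string]$JobName,
        [string]$Path = ".\$JobName.json"   # hypothetical default file name
    )
    $job = Get-AzureRmStreamAnalyticsJob -ResourceGroupName $ResourceGroupName -Name $JobName
    # PropertiesInJson holds the job definition as a JSON string
    $job.PropertiesInJson | Out-File -FilePath $Path -Encoding utf8
    return $Path
}
```

You would call it with something like `Export-JobConfig -ResourceGroupName my-rg -JobName my-job`. Keep in mind that the exported file has no keys or passwords in it.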

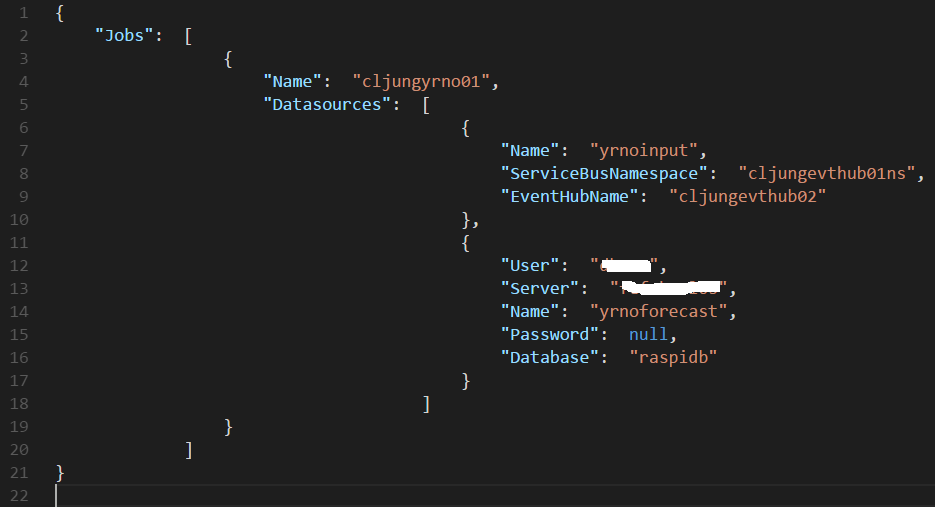

However, I took it one step further and also generate a “datasources” JSON file, much like the separate Parameters file you can have with ARM templates. The idea is that the “datasources” JSON file is the one you should change when moving between environments.

The name of the file is the JobName with “-datasources” appended to it. The format of this file is something I made up myself. You can merge the datasource config of multiple jobs into one single JSON file if you like to have it all in one place. The PowerShell script loads this file during import/create and matches the datasources by name, so if you want to change a namespace or a database server for your StreamAnalytics job, you can easily do it by editing this file and leaving the real config JSON file untouched.
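To illustrate the match-by-name idea, here is a small sketch. Note that the JSON layout and the function name `Find-DataSourceOverride` are invented for this example; the file my script actually writes may look different, so check a real export before relying on any property name.

```powershell
# HYPOTHETICAL layout of a "<JobName>-datasources.json" file, for illustration only.
$datasourcesJson = @'
{
  "datasources": [
    { "name": "eventhub-input", "serviceBusNamespace": "my-test-ns", "eventHubName": "telemetry" },
    { "name": "sql-output", "server": "my-test-sql", "database": "iotdb" }
  ]
}
'@
$datasources = ($datasourcesJson | ConvertFrom-Json).datasources

# Find the override entry that belongs to a job input/output with the given name.
function Find-DataSourceOverride {
    param([object[]]$DataSources, [string]$Name)
    $DataSources | Where-Object { $_.name -eq $Name } | Select-Object -First 1
}

$override = Find-DataSourceOverride -DataSources $datasources -Name 'eventhub-input'
$override.serviceBusNamespace   # my-test-ns
```

Moving to another environment then only means changing values such as `serviceBusNamespace` in this file.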

The format of the config JSON file

The format of the exported JSON config file is publicly documented on MSDN (see the references below). It basically has four major sections for a job:

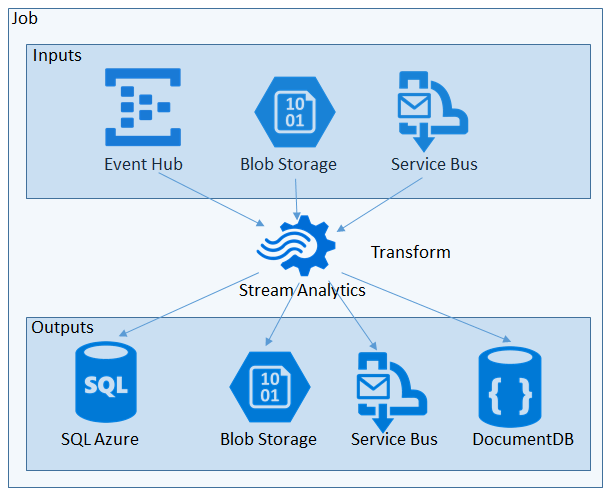

- Job – the actual StreamAnalytics resource you create is called a Job. The Job is a container for everything else and just holds the name and the location (Azure datacenter) where the job resource exists.

- Inputs – A job has 1..N inputs and each input has a datasource and a serialization configuration. The datasource config holds account names, keys, SQL Server and database names, etc. Serialization describes the data format the datasource works with, like CSV or JSON, and the encoding, like UTF8.

- Outputs – A job also has 1..N outputs, which are configured just like inputs, with datasource and serialization configurations. A job can have a different number of inputs and outputs, and a common scenario is to have 1 input and multiple outputs to split the data streaming through.

- Transformation – Data streaming through is processed via a transformation step that looks pretty much like a SQL statement, with a SELECT ... INTO output FROM input syntax. There is one and only one transformation step, so if you have multiple inputs they need to be joined in the SELECT step. This is also the place where you can invoke functions that exist in other places, like in Azure Machine Learning.
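The four sections above can be sketched as a heavily trimmed config. This is a hand-written skeleton based on my reading of the documented format, with made-up names and values, so compare it against a real export. The small loop just lists each input and output together with its datasource type.

```powershell
# Trimmed, hand-written skeleton of a job config; names and values are placeholders.
$configJson = @'
{
  "location": "West Europe",
  "properties": {
    "sku": { "name": "standard" },
    "inputs": [
      { "name": "eventhub-input",
        "properties": {
          "type": "stream",
          "serialization": { "type": "JSON", "properties": { "encoding": "UTF8" } },
          "datasource": { "type": "Microsoft.ServiceBus/EventHub",
                          "properties": { "eventHubName": "telemetry" } } } }
    ],
    "transformation": {
      "name": "main",
      "properties": { "streamingUnits": 1,
                      "query": "SELECT * INTO [sql-output] FROM [eventhub-input]" } },
    "outputs": [
      { "name": "sql-output",
        "properties": {
          "datasource": { "type": "Microsoft.Sql/Server/Database",
                          "properties": { "table": "Telemetry" } } } }
    ]
  }
}
'@
$config = $configJson | ConvertFrom-Json

# Walk all inputs and outputs and show which datasource type each one uses.
$all = @($config.properties.inputs) + @($config.properties.outputs)
$summary = foreach ($io in $all) {
    "{0} -> {1}" -f $io.name, $io.properties.datasource.type
}
$summary
```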

The PowerShell script I have developed for this blog post is mainly focused on updating the DataSource configuration in the Inputs and Outputs steps, since that is what varies between environments. That is, it tries to connect the arrows in the above figure.

PowerShell script

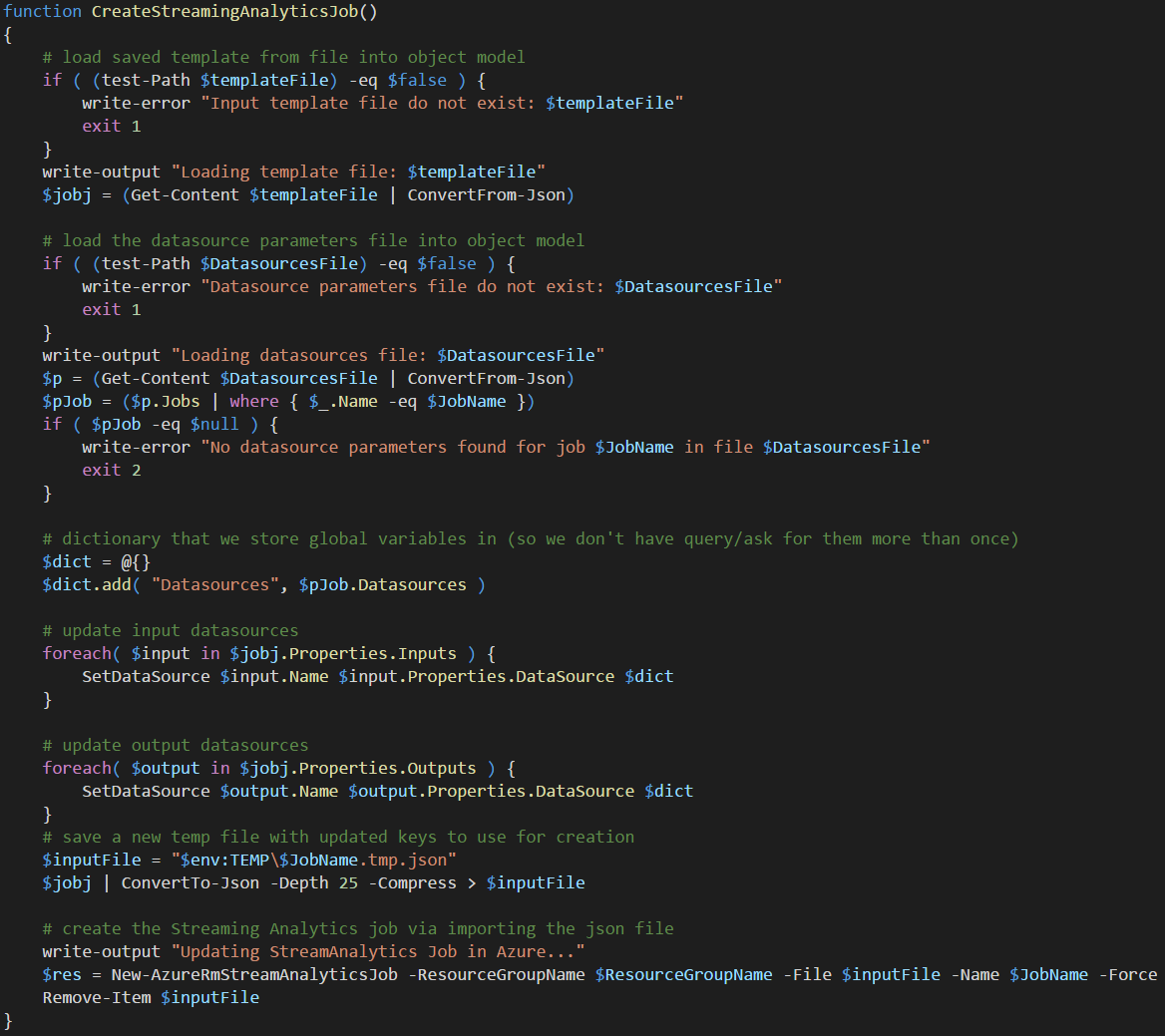

The PowerShell script can do multiple operations: export the JSON file, start/stop the StreamAnalytics job, and create (import) or delete the job. Everything in the code is pretty simple except the create/import, which requires some logic. The create/import logic is focused on fixing up the DataSources of the inputs/outputs. To do that, we load the datasources JSON file and pass it to a function that goes through each datasource in the inputs/outputs of the job and updates the JSON config.

Once the JSON values are updated for the DataSources, the script saves the config to a temp file and invokes the New-AzureRmStreamAnalyticsJob cmdlet to create/alter the resource in Azure. That sounds easy-peasy, but as you will see, supporting multiple datasources makes the script grow in lines of code.
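A rough sketch of that last step, under the assumption that the updated config is held as a PowerShell object: `Import-JobConfig` and the temp file name are placeholders for this example and not necessarily what my script uses.

```powershell
# Sketch only: writes the patched config to a temp file and submits it.
function Import-JobConfig {
    param([string]$ResourceGroupName, [string]$JobName, [object]$Config)

    $tempFile = Join-Path ([System.IO.Path]::GetTempPath()) "$JobName-deploy.json"
    # -Depth matters: the default depth of ConvertTo-Json (2) would cut off
    # the nested datasource properties.
    $Config | ConvertTo-Json -Depth 20 | Out-File -FilePath $tempFile -Encoding utf8
    try {
        New-AzureRmStreamAnalyticsJob -ResourceGroupName $ResourceGroupName `
            -Name $JobName -File $tempFile -Force
    }
    finally {
        # The temp file now contains keys and passwords, so don't leave it around.
        Remove-Item $tempFile -ErrorAction SilentlyContinue
    }
}
```

Deleting the temp file in `finally` is a design choice of this sketch, since the file holds the secrets that the export had stripped out.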

Updating DataSources

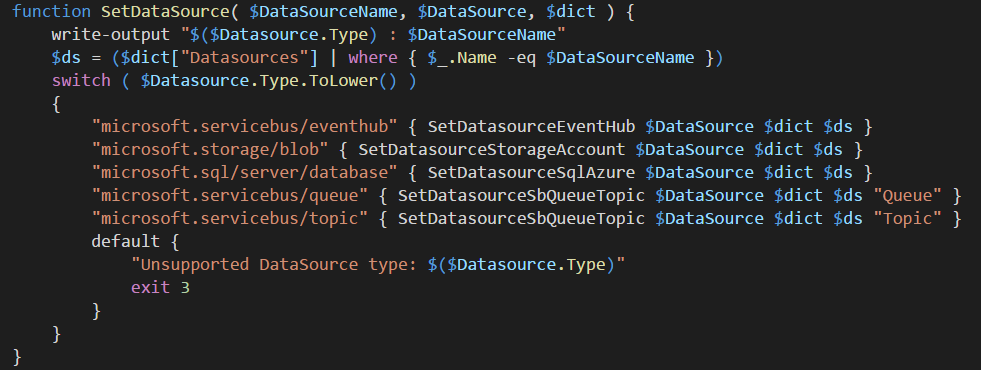

The JSON config contains a Type attribute for each DataSource, in the same format as in a Resource Template, so the job here is to handle each type of datasource separately. The code starts by looking up the datasource by name in the loaded JSON file that contains the datasources configuration.

Stream Analytics currently supports input from Event Hub, Storage and IoT Hub, and of those I skipped IoT Hub for now. Output supports a lot more, and here I skipped Power BI, DocumentDB and a few more and just stuck to the basic ones.
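The dispatch on the Type attribute could look roughly like the sketch below. The type strings are the ones I would expect in an exported config, and the handler names are hypothetical, made up for this example.

```powershell
# Map a datasource type to the (hypothetical) function that knows how to fix it up.
function Get-DataSourceHandler {
    param([string]$Type)
    switch ($Type) {
        'Microsoft.ServiceBus/EventHub' { 'Update-EventHubDataSource' }
        'Microsoft.ServiceBus/Queue'    { 'Update-ServiceBusDataSource' }
        'Microsoft.ServiceBus/Topic'    { 'Update-ServiceBusDataSource' }
        'Microsoft.Storage/Blob'        { 'Update-StorageDataSource' }
        'Microsoft.Storage/Table'       { 'Update-StorageDataSource' }
        'Microsoft.Sql/Server/Database' { 'Update-SqlDataSource' }
        default { throw "Unsupported datasource type: $Type" }
    }
}

Get-DataSourceHandler -Type 'Microsoft.Storage/Blob'   # Update-StorageDataSource
```

Failing loudly on an unknown type is deliberate in this sketch: a datasource that silently keeps its empty key would only surface as an error once the job starts.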

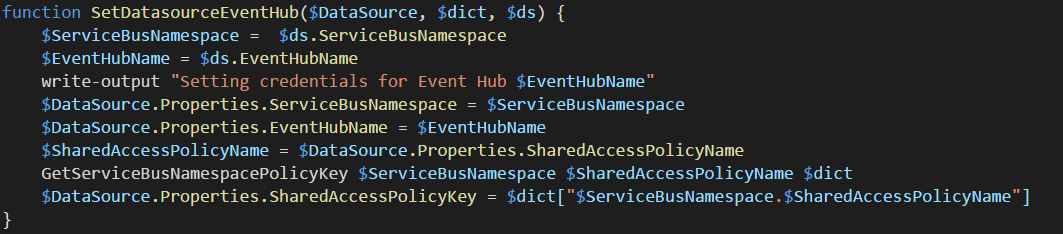

Since each DataSource is unique, each is handled by a different function. The Event Hub function sets the namespace and the Event Hub name and retrieves the SharedAccessPolicyKey. That key is the item Azure does not export, and it is also the key your IoT device, like a Raspberry Pi, needs in order to ingest data into the event hub.

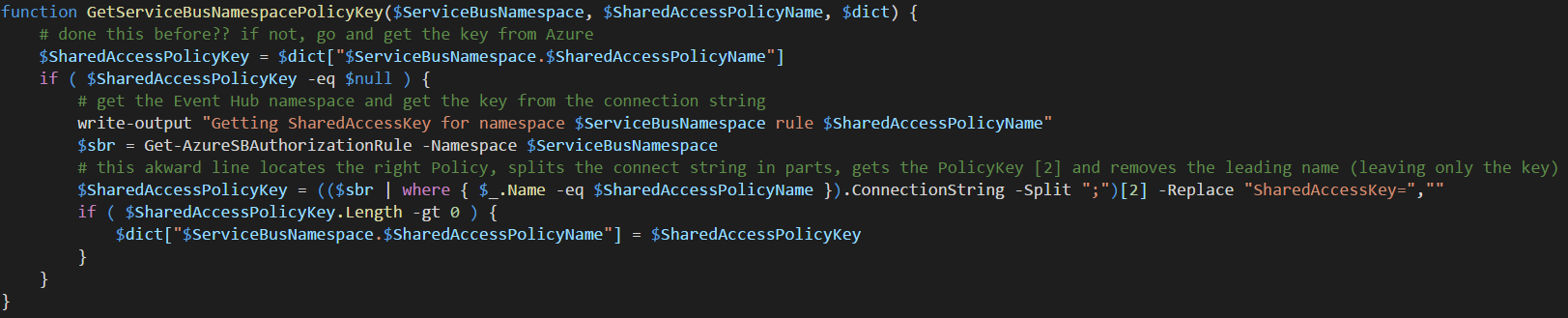

Retrieving the SharedAccessPolicyKey is a matter of using the existing APIs for Service Bus to get the value from Azure. The code checks a dictionary variable to see if we already have this info, and uses the Get-AzureSBAuthorizationRule cmdlet to get the key if it isn’t in the dictionary. (The awkward PowerShell line below is a one-liner that finds the right rule in an array and “digs out” the key.)
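Spelled out over a few more lines, the lookup-with-cache idea might look like this sketch. It assumes that the rule objects returned by Get-AzureSBAuthorizationRule carry a Name and a ConnectionString property, and the function names are made up for this example.

```powershell
# Pull the key out of "Endpoint=...;SharedAccessKeyName=...;SharedAccessKey=..."
function Get-KeyFromConnectionString {
    param([string]$ConnectionString)
    foreach ($part in $ConnectionString.Split(';')) {
        if ($part -like 'SharedAccessKey=*') {
            return $part.Substring('SharedAccessKey='.Length)
        }
    }
    return $null
}

# Return the key for a namespace-level rule, asking Azure only on a cache miss.
function Get-SharedAccessPolicyKey {
    param([string]$Namespace, [string]$PolicyName, [hashtable]$Cache)
    $cacheKey = "$Namespace|$PolicyName"
    if (-not $Cache.ContainsKey($cacheKey)) {
        # Assumption: each returned rule has Name and ConnectionString properties.
        $rule = Get-AzureSBAuthorizationRule -Namespace $Namespace |
            Where-Object { $_.Name -eq $PolicyName } | Select-Object -First 1
        if ($null -eq $rule) { throw "Rule '$PolicyName' not found on namespace '$Namespace'" }
        $Cache[$cacheKey] = Get-KeyFromConnectionString $rule.ConnectionString
    }
    return $Cache[$cacheKey]
}
```

The cache is passed in as a hashtable, so several inputs and outputs that share one policy only cost a single call to Azure.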

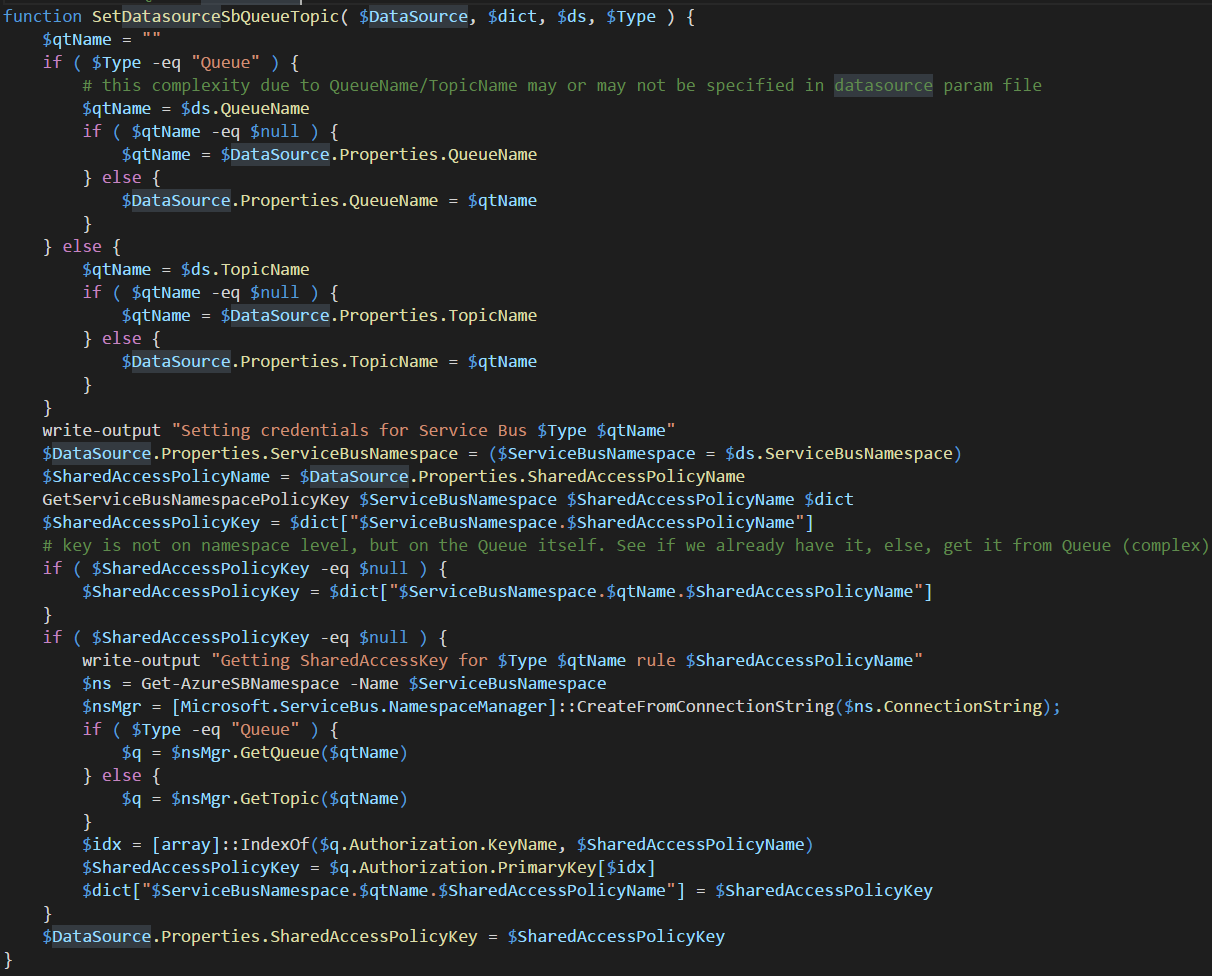

Updating the DataSource for a Service Bus Queue or Topic is a little more complicated, since you can have a SAS rule on the namespace level or on the queue/topic level, so this function needs to do more lookups. I also made it possible to change queue/topic names when switching environments, which is why you see a lot of if’s in the beginning.

If the SAS key isn’t found on the namespace level, we need to go down to the Queue/Topic level. Here we are leaving the yellow brick road of native PowerShell cmdlet support and need to use CLR interop with Microsoft.ServiceBus.dll to use the NamespaceManager. This means that rewriting this for Azure CLI on Mac and/or Linux will be a pain.
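A minimal sketch of the queue-level lookup via CLR interop, assuming you have Microsoft.ServiceBus.dll on disk and a namespace connection string with Manage rights. The function name and parameters are placeholders; for a topic you would call GetTopic instead of GetQueue.

```powershell
# Sketch only: needs Microsoft.ServiceBus.dll (e.g. from the WindowsAzure.ServiceBus package).
function Get-QueueSasKey {
    param(
        [string]$DllPath,                    # full path to Microsoft.ServiceBus.dll
        [string]$NamespaceConnectionString,
        [string]$QueueName,
        [string]$PolicyName
    )
    Add-Type -Path $DllPath
    $nsManager = [Microsoft.ServiceBus.NamespaceManager]::CreateFromConnectionString($NamespaceConnectionString)
    $queue = $nsManager.GetQueue($QueueName)
    # Authorization holds the SAS rules defined on the queue itself
    $rule = $queue.Authorization |
        Where-Object { $_.KeyName -eq $PolicyName } | Select-Object -First 1
    if ($null -eq $rule) { throw "Rule '$PolicyName' not found on queue '$QueueName'" }
    return $rule.PrimaryKey
}
```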

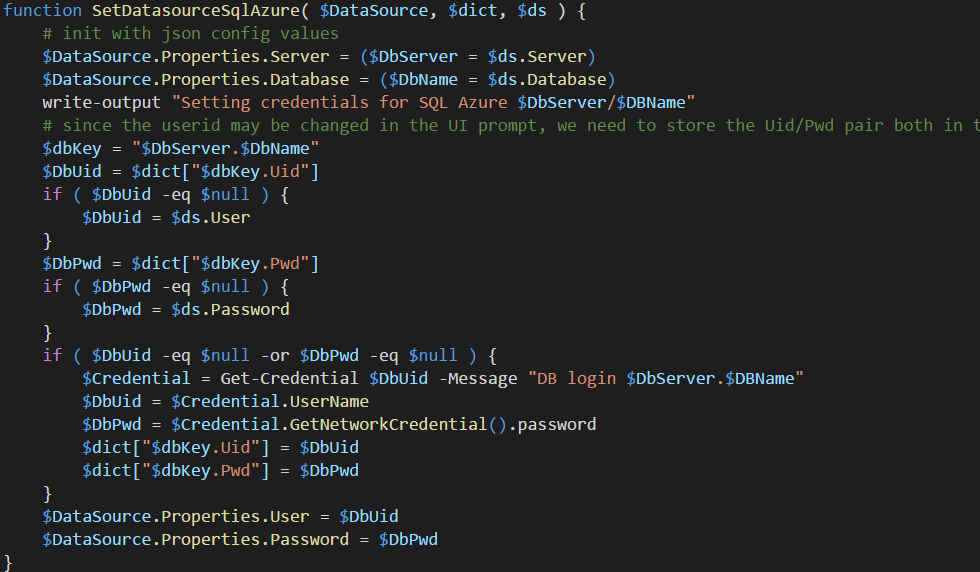

Handling a SQL Azure database as a datasource comes with a different kind of complexity. There is no way to query Azure for the password of the user id configured in the portal. The script can’t look it up, which means it either needs to be in the JSON datasources file or we need to prompt for it.
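That "file first, prompt second" fallback can be sketched as follows. The `password` property on the datasources entry and the function name are assumptions made for this example.

```powershell
# Use the password from the datasources entry if present, otherwise ask for it.
function Resolve-SqlPassword {
    param([object]$Override, [string]$User)
    if ($Override -and $Override.password) {
        return $Override.password
    }
    $secure = Read-Host -Prompt "Password for SQL user '$User'" -AsSecureString
    # The job JSON needs the clear-text value, so unwrap the SecureString.
    return (New-Object System.Net.NetworkCredential('', $secure)).Password
}
```

Keeping the password in the datasources file is convenient for demo environments, but prompting is the safer choice if that file ends up in source control.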

Running the script

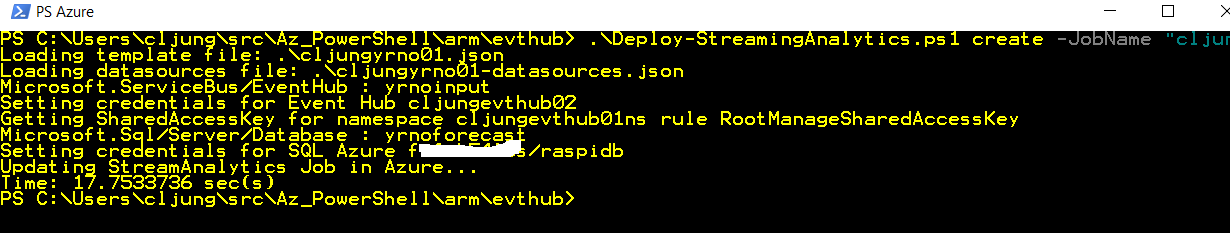

Running the script to create/import the job assumes you have run it once before to export the job as a JSON file (which you’ll need in the create/import phase). This means you design and develop the Stream Analytics job in the portal and then use my script to export it to a JSON file. Once you have that, you can recreate the Stream Analytics job with a single command (see “How to use it” at the end of this post).

Summary

Stream Analytics is powerful and an integral part of Azure Analytics (and what is now being rebranded as Cortana Intelligence or the Intelligent Cloud). You will pick up pretty easily how to use it in the portal, but automating its provisioning is a different story. Historically, Service Bus and Stream Analytics haven’t been best in class when it comes to PowerShell and tooling support. It has become better, and in this blog post I’ve shown you something you can use in your DevOps work.

In coming posts I’ll tie it all together and show you a simulated IoT agent you can run on your Raspberry Pi (or an Ubuntu Azure VM if you don’t have a Raspberry Pi) and also how you integrate Machine Learning. When you realize that you can provision all of this in just minutes, you will start to see the power of a public cloud such as Azure.

References

Creating an Event Hub namespace using PowerShell – Paolo Salvatori

https://blogs.msdn.microsoft.com/paolos/2014/12/01/how-to-create-a-service-bus-namespace-and-an-event-hub-using-a-powershell-script/

MSDN Documentation of StreamAnalytics JSON file

https://msdn.microsoft.com/library/dn834994.aspx

MSDN Documentation of StreamAnalytics PowerShell cmdlets

https://msdn.microsoft.com/en-us/library/mt603479.aspx

Azure Documentation (that makes a level 100 effort explaining this)

https://azure.microsoft.com/en-us/documentation/articles/stream-analytics-monitor-and-manage-jobs-use-powershell/

StreamAnalytics – Getting Started documentation

https://azure.microsoft.com/en-us/documentation/articles/stream-analytics-get-started/

Source Code

The PowerShell script can be downloaded here:

https://github.com/cljung/az-streaming-analytics

How to use it:

- Design your StreamAnalytics job in the Azure portal

- Export the config via

.\Deploy-StreamingAnalytics.ps1 export -JobName <my-name>

- Delete the StreamAnalytics job via

.\Deploy-StreamingAnalytics.ps1 stop -JobName <my-name>

.\Deploy-StreamingAnalytics.ps1 delete -JobName <my-name>

- Possibly edit the <my-name>-datasources.json file

- (Re)create the StreamAnalytics job via

.\Deploy-StreamingAnalytics.ps1 create -JobName <my-name>