A customer of mine was faced with the situation of using the ftp protocol for data ingestion while keeping the data in Azure Blob Storage, where it could be fronted with a CDN. But since Azure Blob Storage doesn’t support the ftp protocol, we had to find a solution. Keep in mind that changing data ingress to use the Storage REST APIs was out of the question. The ftp protocol was a non-negotiable requirement.

This meant deploying an ftp server on Azure IaaS VMs, which is possible and not rocket science. However, the data ingress volume was going to be huge, and I mean HUGE: 30 ftp clients running time-critical uploads 24×7. That ruled out using the standard IIS FTP server with local file storage as a landing place and scripts on the VM syncing local storage to Blob Storage. Also, how would you get commands like LIST/DEL/GET to work without a local cache on the VM? And how would each VM sync the changes other VMs had made to that cache? I had to find another solution.

FTP to Azure Blob Storage Bridge

On CodePlex there is a sample called “FTP to Azure Blob Storage Bridge” from March 2011 that wraps an FTP server implementation in C# as a Cloud Service. I downloaded it and upgraded it to the latest Azure SDK. I also modified it to be a C# Console Program instead of an Azure Worker Role, because I wanted to target Azure IaaS VMs and use Custom Script Extensions to set up the ftp server. The reason was that the customer might want to deploy an arbitrary number of Cloud Services, each containing an arbitrary number of ftp server VMs, around the globe. Having their DevOps team muck with cspkg files, etc., was simply not an option. It had to be a PowerShell-only deployment method that was as dead simple as possible.

Architecture

The architecture consists of 2 or more ftp server VMs, where the servers don't work with local file storage or a file share but directly against Azure Blob Storage.

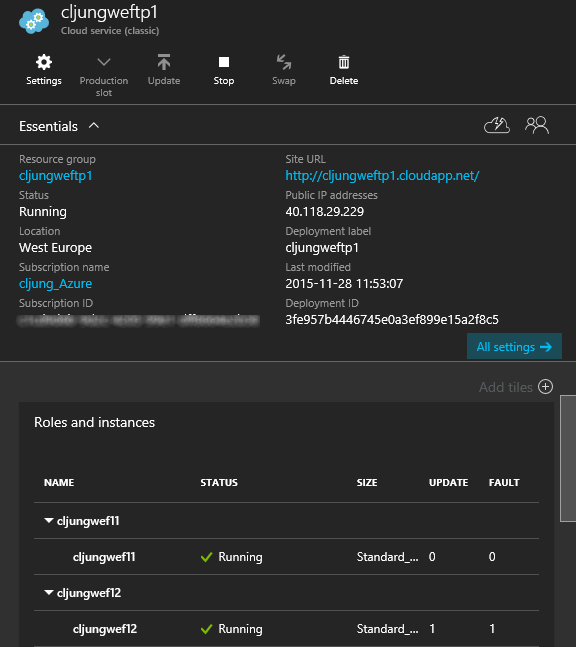

The VMs are provisioned in the same Azure Cloud Service and within the same Availability Set so that we always have one machine running. In the picture below, you can see that the two VMs are in different update and fault domains, which means that Azure keeps them on separate physical hardware, so a single hardware failure doesn't hit both, and that Azure won't take both of them down during maintenance.

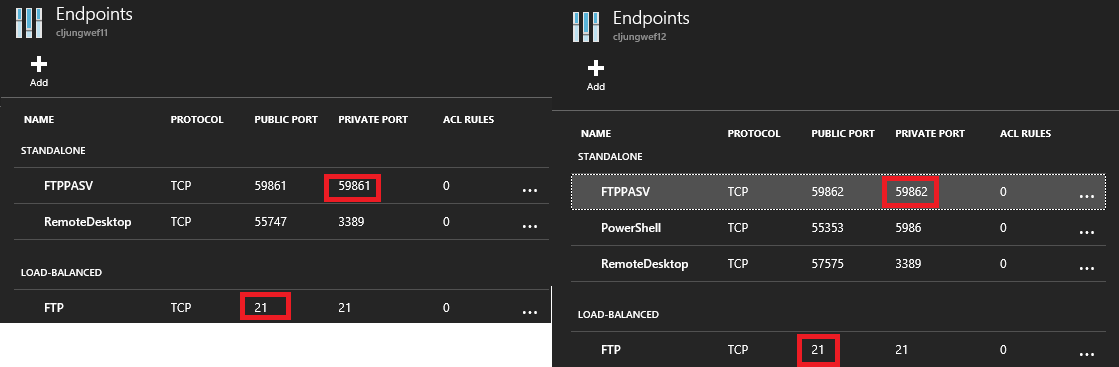

The ftp commands are sent over port 21, and Azure load balances this port so that connecting ftp clients are routed round robin across the available servers. The ftp servers use passive mode for their data connections, which also works best in Azure: the ftp client opens a second TCP connection to the server when data is about to be transferred for GET/PUT/LIST etc. The ftp protocol works so that when it’s time to open the data connection, the server sends the client the port number on which it can be reached. The passive ports can’t be load balanced, since there is no way to make sure that the passive port connection ends up on the same VM as the port 21 connection. I.e., we might send commands to one server and try to shuffle data to/from another server (which will be very surprised by that attempt, and the transfer fails).
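To make the passive-mode handshake concrete: in RFC 959 the client sends PASV and the server answers with a 227 reply that encodes an IP address and a port as six comma-separated numbers, with the port split into a high and a low byte. The Python sketch below is illustrative only (it is not code from the ftp server); it shows why each VM must advertise the Cloud Service's public VIP together with its own passive port, since that is exactly where the client will open the data connection.

```python
import re
from typing import Tuple

# Matches the "(h1,h2,h3,h4,p1,p2)" part of a 227 reply.
_PASV_TUPLE = re.compile(r"\((\d+),(\d+),(\d+),(\d+),(\d+),(\d+)\)")


def parse_pasv_reply(reply: str) -> Tuple[str, int]:
    """Return the (host, port) a server advertises in its 227 reply to PASV."""
    if not reply.startswith("227"):
        raise ValueError(f"not a PASV reply: {reply!r}")
    match = _PASV_TUPLE.search(reply)
    if match is None:
        raise ValueError(f"no host/port tuple in: {reply!r}")
    h1, h2, h3, h4, p1, p2 = (int(g) for g in match.groups())
    # The port travels as two bytes: p1 is the high byte, p2 the low byte.
    return f"{h1}.{h2}.{h3}.{h4}", p1 * 256 + p2


def format_pasv_reply(public_ip: str, port: int) -> str:
    """Build the 227 reply a server would send for its public IP and passive port."""
    octets = public_ip.split(".")
    if len(octets) != 4 or not 0 < port < 65536:
        raise ValueError("expected an IPv4 address and a valid TCP port")
    return "227 Entering Passive Mode ({},{},{},{},{},{})".format(
        *octets, port // 256, port % 256
    )
```

For example, a reply ending in `(203,0,113,10,233,213)` tells the client to connect to 203.0.113.10 on port 233 × 256 + 213 = 59861. If that port belongs to a different VM than the one holding the control connection, the transfer fails as described above.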

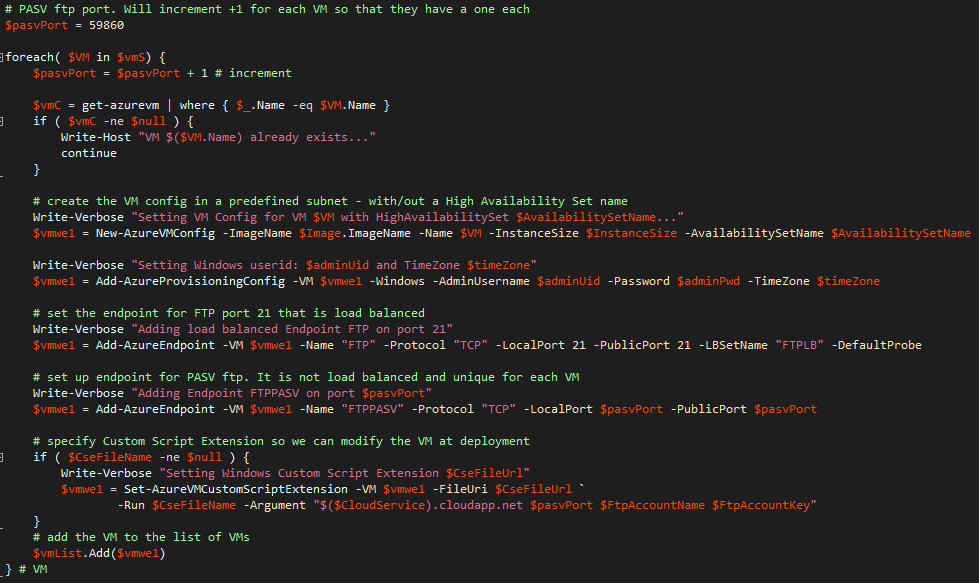

This means that the passive port cannot be load balanced in Azure. Instead, we have to give every ftp server VM a unique port. In our case, the first VM in a Cloud Service uses passive port 59861, the second 59862, and so on. The PowerShell provisioning code just increments the port number as it creates the endpoints. Each ftp server VM then needs to know which passive port it was given, write it to its app.config file and open it for inbound traffic in the firewall.
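The port numbering scheme can be sketched as follows. This is a hypothetical Python helper that mirrors what the PowerShell provisioning script is described as doing; it is not that script, and the VM names in the usage example are made up for illustration.

```python
from typing import Dict, List

BASE_PASSIVE_PORT = 59861  # passive port of the first VM, as used in the text


def assign_passive_ports(vm_names: List[str],
                         base_port: int = BASE_PASSIVE_PORT) -> Dict[str, int]:
    """Map each VM in one Cloud Service to its own passive port: base, base+1, ..."""
    if len(set(vm_names)) != len(vm_names):
        raise ValueError("VM names must be unique within a Cloud Service")
    ports = {name: base_port + i for i, name in enumerate(vm_names)}
    if ports and max(ports.values()) > 65535:
        raise ValueError("ran out of TCP port numbers")
    return ports
```

Calling `assign_passive_ports(["ftp1", "ftp2", "ftp3"])` yields 59861, 59862 and 59863. Because the numbering restarts per Cloud Service, two Cloud Services can reuse the same ports without conflict, since each has its own public VIP.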

Deployment

Deployment is based on a standard Windows Server 2012 image from the Azure image gallery. The deployment code is written with the Service Management APIs and is nothing exciting. The Add-AzureEndpoint call for port 21 adds it to an LBSetName, while the Add-AzureEndpoint call for the passive port isn’t load balanced. The script also increments the passive port number for each VM in the Cloud Service.

The more exciting part is what happens in the Set-AzureVMCustomScriptExtension (CSE) call. The FileUri parameter is an array of two files, where the first is a PowerShell script and the second a zipfile. The PowerShell script is the installation script that runs inside the VM during its creation and performs the tasks needed to install the ftp server. The zipfile contains the ftp server binaries. Note also that the CSE is given four values in the -Argument parameter: the name of the cloud service (used to look up its public VIP), the passive port, and the Azure Storage Account Name and Key that the ftp server will use as its file store.
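Per VM, the deployment therefore boils down to two endpoints and one argument string for the CSE. The Python model below is a sketch of that plan, not the actual PowerShell; the endpoint names, the `ftp-lb` set name and the choice to join the four values into a single space-separated string are assumptions.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Endpoint:
    name: str
    public_port: int
    local_port: int
    lb_set_name: Optional[str]  # None means "not load balanced"


def plan_vm(cloud_service: str, passive_port: int, storage_account: str,
            storage_key: str, lb_set_name: str = "ftp-lb") -> Tuple[List[Endpoint], str]:
    """Endpoints and CSE argument string for one ftp server VM (hypothetical model)."""
    endpoints = [
        # Port 21 is shared by all VMs in the Cloud Service through one LB set.
        Endpoint("ftp", 21, 21, lb_set_name),
        # The passive port is unique to this VM and deliberately not load balanced.
        Endpoint("ftp-passive", passive_port, passive_port, None),
    ]
    # Same order as in the text: service name, passive port, account name, account key.
    cse_argument = " ".join([cloud_service, str(passive_port), storage_account, storage_key])
    return endpoints, cse_argument
```

Public and local port are kept identical on purpose: the server advertises the port it listens on in its PASV reply, so if Azure mapped the passive endpoint to a different public port, clients would try to connect to the wrong one.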

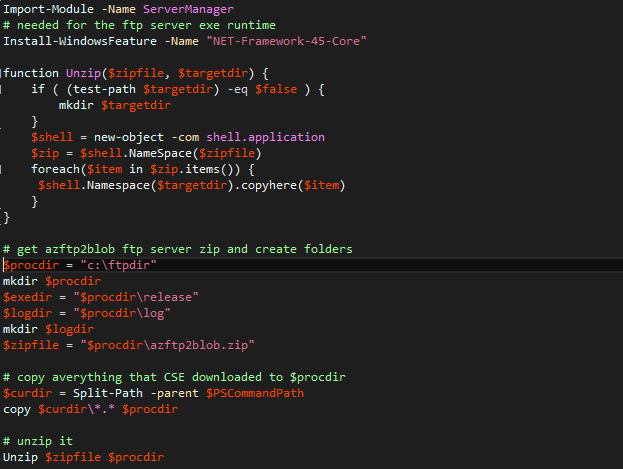

The installation script that Set-AzureVMCustomScriptExtension invokes as part of the provisioning process starts by creating the root folder for the ftp server and copying the zipfile from the folder the CSE script runs in to the ftp folder we just created. Then it unzips the binaries.

Since I basically zipped the Release directory from my Visual Studio output, I will have the ftp server under C:\ftpdir\Release on my Azure VM.
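The copy-and-unzip step is simple enough to show in a few lines. The sketch below uses Python's `zipfile` and `shutil` instead of the PowerShell the installation script actually uses; `install_binaries` is a hypothetical name, and the paths are whatever the caller passes (for example `C:\ftpdir` on the VM).

```python
import shutil
import zipfile
from pathlib import Path


def install_binaries(zip_path: str, ftp_root: str) -> Path:
    """Create the ftp root folder, copy the zipfile into it and extract it there."""
    root = Path(ftp_root)
    root.mkdir(parents=True, exist_ok=True)  # safe to rerun if the folder exists
    local_zip = root / Path(zip_path).name
    shutil.copy2(zip_path, local_zip)        # keep a copy next to the binaries
    with zipfile.ZipFile(local_zip) as archive:
        archive.extractall(root)             # e.g. a zipped Release folder lands in root/Release
    return root
```

With a zip of the Visual Studio Release directory and `ftp_root` set to `C:\ftpdir`, the server ends up under `C:\ftpdir\Release`, matching the layout described above.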

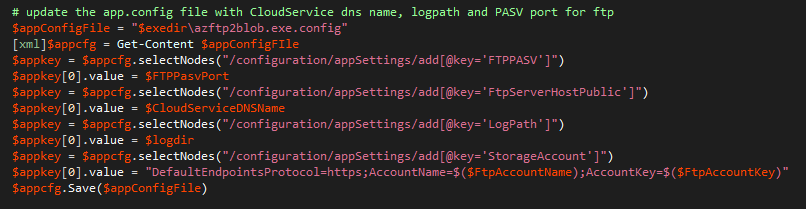

We need to modify the app.config file for each ftp server VM instance, since it contains settings that are unique and that we cannot preset when we create the zipfile. The main one is the passive port, which comes from the value incremented by the deployment PowerShell script. And since this solution may be deployed in many different Cloud Services in the same or different Azure datacenters, we also update the Cloud Service DNS name and the Name and Key of the Storage Account. Since the app.config file is just an XML file, we can treat it as one and use PowerShell’s built-in XML support with a little help from XPath.
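Here is the same idea in Python, using the limited XPath that `xml.etree.ElementTree` supports to locate `<add key=... value=.../>` entries under `<appSettings>`. This is a sketch, not the PowerShell from the installation script, and the key names used in the test (`PassivePort`, `StorageAccountName`) are hypothetical; the real ones are whatever the ftp server's app.config defines.

```python
import xml.etree.ElementTree as ET
from typing import Dict


def update_app_settings(config_path: str, settings: Dict[str, object]) -> None:
    """Overwrite the value attribute of existing <appSettings><add key=.../> entries."""
    tree = ET.parse(config_path)
    root = tree.getroot()  # the <configuration> element
    for key, value in settings.items():
        node = root.find(f"./appSettings/add[@key='{key}']")
        if node is None:
            # Fail loudly so a misspelled key is caught at provisioning time.
            raise KeyError(f"appSettings key not found: {key}")
        node.set("value", str(value))
    tree.write(config_path, encoding="utf-8", xml_declaration=True)
```

Raising on a missing key, rather than silently adding it, is a design choice: a typo in a key name then surfaces during provisioning instead of as a server that quietly ignores the setting.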

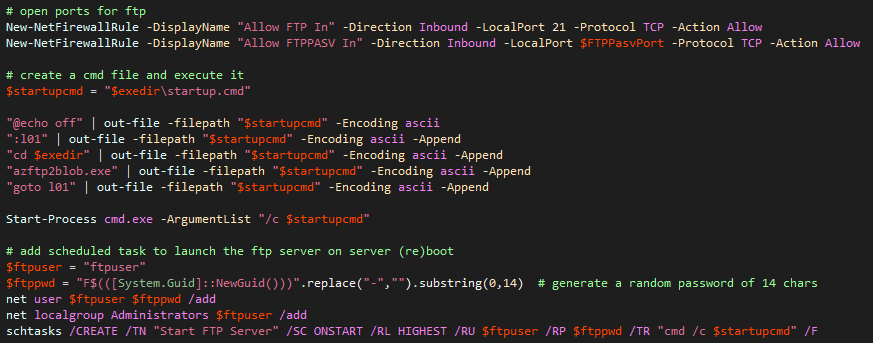

To start the ftp server, we first need to open the ftp ports in the firewall; the passive port is again passed as a parameter to the CSE script. Then we create a batch file on the fly that starts the ftp server. If the server crashes, a goto in the batch file restarts it.
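The firewall rules and the self-restarting batch file can be sketched as generated text. The `netsh advfirewall firewall add rule` syntax is the standard Windows one; the rule names, the working directory and the executable name `FtpServer.exe` are hypothetical placeholders, and this Python version only builds the strings rather than running anything.

```python
from typing import List


def firewall_commands(passive_port: int) -> List[str]:
    """netsh commands allowing inbound TCP on the control port and this VM's passive port."""
    rule = ('netsh advfirewall firewall add rule name="{name}" '
            'dir=in action=allow protocol=TCP localport={port}')
    return [
        rule.format(name="FTP control", port=21),
        rule.format(name="FTP passive", port=passive_port),
    ]


def restart_loop_batch(work_dir: str, exe_name: str = "FtpServer.exe") -> str:
    """Batch file text that starts the ftp server and restarts it whenever it exits."""
    lines = [
        "@echo off",
        f"cd /d {work_dir}",
        ":start",
        exe_name,      # blocks until the server process exits or crashes
        "goto start",  # then jump back and start it again
    ]
    return "\r\n".join(lines) + "\r\n"  # batch files conventionally use CRLF
```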

The ftp server is started directly when the CSE script runs, but that doesn’t cover a server reboot, since the CSE script isn’t run again then. To make the ftp server start automatically after a reboot, we create a scheduled task that executes the batch file at startup. We also create a dedicated user with a random password to run the ftp server.
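The auto-start part can be sketched the same way: generate a random password, create a local user with `net user`, and register the batch file with `schtasks /SC ONSTART` so it runs at boot under that account. This Python version only builds the command lines; the user name, task name and batch file path are hypothetical, and a real script may also need to grant the account the rights the task requires.

```python
import secrets
import string
from typing import List

SYMBOLS = "!#*+=?@"


def random_password(length: int = 24) -> str:
    """Random password containing lower, upper, digit and symbol characters."""
    if length < 4:
        raise ValueError("length must be at least 4")
    alphabet = string.ascii_letters + string.digits + SYMBOLS
    while True:  # redraw until every character class is present
        pwd = "".join(secrets.choice(alphabet) for _ in range(length))
        if (any(c.islower() for c in pwd) and any(c.isupper() for c in pwd)
                and any(c.isdigit() for c in pwd) and any(c in SYMBOLS for c in pwd)):
            return pwd


def startup_task_commands(user: str, password: str, batch_path: str,
                          task_name: str = "FtpServer") -> List[List[str]]:
    """argv lists that create the service user and an ONSTART scheduled task."""
    return [
        ["net", "user", user, password, "/add"],
        ["schtasks", "/Create", "/TN", task_name, "/TR", batch_path,
         "/SC", "ONSTART", "/RU", user, "/RP", password, "/F"],
    ]
```

Each argv list could then be run with `subprocess.run(argv, check=True)` on the VM; passing a list rather than a single string means cmd.exe never gets a chance to interpret special characters in the password.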

Voila! Now we can create a highly available, load balanced ftp server that acts as a proxy over Azure Blob Storage. Once it’s deployed, we can use popular ftp clients, like FileZilla, to connect to the ftp server and do all the tasks you can do with an ftp server.

Load testing

In the proof-of-concept scenario this was used for, the ftp server VMs were put to hard work. They were used around the clock for 3+ days, with 10+ clients constantly issuing commands to them. Judging just how this component of the solution performed, I must say it passed with honours. Apart from a violation of the RFC959 specification of how an ftp server should handle overwrites during upload, I found only 3 bugs in the ftp server implementation, and once those were fixed it really was a stable solution. My fear that working directly against Azure Blob Storage might be slow and give unreliable performance turned out to be just fear and not fact.

Summary

Is it a recommended solution to use a custom-coded ftp server on top of Azure Blob Storage? Of course not! It introduces additional VMs that cost money and must be maintained, when you really should change the client code to use the Storage REST API directly. However, if, as in this case, you can’t change the client code and have to live with that requirement, this solution can be used, since it is the thinnest layer and fastest code I can think of to get the work done.

References

codeplex – FTP to Azure Blob Storage Bridge

https://ftp2azure.codeplex.com/

source code

https://github.com/cljung/azftp2blob

The changes I made to the source code from CodePlex

- Changed the project to be a Console Application instead of a Cloud Service Worker Role

- Made the changes required to get the code running with the latest Azure SDK

- Fixed three bugs

  - Multiple adjacent forward slashes are treated differently by NTFS than by Azure Blob Storage, so commands like DEL would fail on such paths

  - FtpConnectionObject.cs does a sMessage.Substring(…, sMessage.IndexOf('\r')) that fails under stress when IndexOf returns -1

  - There was no try/catch around InitializeSocketHandler() in the FtpServer class, which may throw ObjectDisposedException under stress

- Made the STOR command comply with RFC959 when overwriting existing files

- Changed so that the list of accounts that can log in is stored in a separate file rather than in app.config

- Made user IDs case insensitive during login

- Added rudimentary logging, similar to the IIS FTP logging you can get. Note that this is not complete
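Two of the bug fixes are easy to illustrate. The Python below is an analog of the ideas, not the C# changes in the repository, and the function names are hypothetical: collapsing adjacent slashes so that an ftp path maps to a single blob name, and treating a missing '\r' as "command not complete yet" instead of passing -1 on to a substring call.

```python
import re
from typing import Optional


def normalize_ftp_path(path: str) -> str:
    """Collapse runs of '/' so that 'a//b' and 'a/b' address the same blob name.

    NTFS treats adjacent slashes as one separator, but in Blob Storage they
    are part of the blob name, so paths must be normalized before use.
    """
    return re.sub(r"/{2,}", "/", path)


def read_command_line(buffer: str) -> Optional[str]:
    """Return the command up to the first CR, or None if it hasn't fully arrived."""
    end = buffer.find("\r")
    if end == -1:
        # Under stress a read can deliver a partial command; keep buffering
        # instead of slicing with -1 (the C# Substring call throws on it).
        return None
    return buffer[:end]
```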